Research

Our research centers on human modeling and behavior analysis; appearance modeling, inverse rendering, and material recognition; and physics-based vision and computational photography. Be sure to scroll down to the bottom!

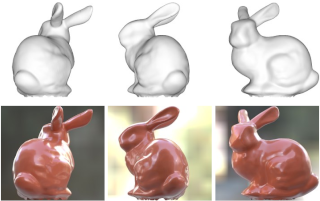

MAtCha Gaussians

Photorealistic Gaussians from sparse views that readily provide a high-quality mesh.

Neural Optimizer for Multiview HMR

Occlusion-aware multiview human shape and pose recovery as learned optimization.

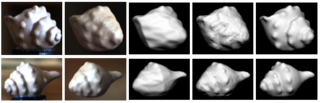

Multistable Shape from Shading

Diffusion-based SFS lets you sample multistable shape perception.

Camera Pose from Reflection

Correspondences in the reflected world enable camera pose recovery without background.

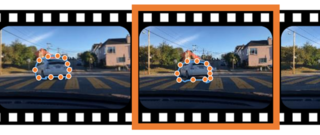

Camera Height Doesn't Change

Camera height invariance enables road-scene metric monocular depth estimation.

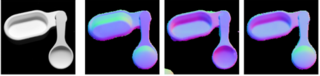

Diffusion Reflectance Map

Single-Image Stochastic Inverse Rendering of Illumination and Reflectance.

Fresnel Microfacet BRDF

THE BRDF model that unifies Polari-Radiometric Surface-Body Reflection.

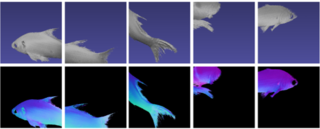

nLMVS-Net: Deep Non-Lambertian Multi-View Stereo

Multi-view stereo for joint shape and reflectance estimation of complex surfaces.

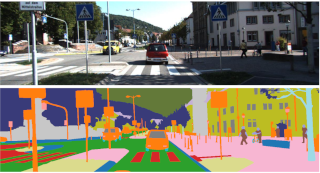

RGB Road Scene Material Segmentation

Road-scene per-pixel material recognition from ordinary RGB images.

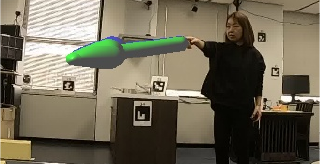

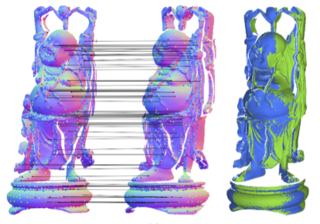

Extrinsic Camera Calibration from A Moving Person

A person is a moving calibration target consisting of easily associated oriented points.

Dynamic 3D Gaze from Afar

Gaze estimation from eye-head-body coordination without relying on the eye appearance.

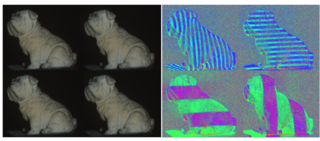

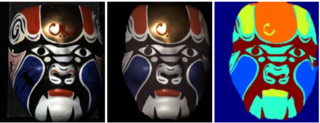

Multimodal Material Segmentation

Road-scene per-pixel material recognition with multimodal imaging.

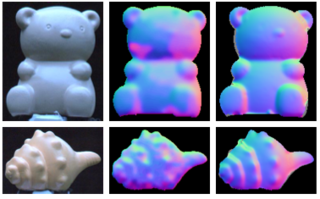

Polarimetric Normal Stereo

Binocular stereo of polarization cameras for fine surface geometry recovery.

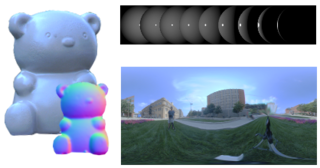

Shape from Sky

The polarization pattern of the sky reflected by the surface gives us per-pixel surface normals.

Consistent 3D Human Shape from Repeatable Action

Clothed human body shape from videos capturing a few instances of a repeatable action.

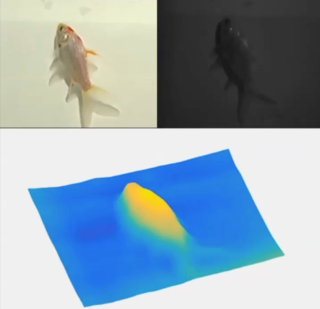

Non-Rigid Shape from Water

Underwater 3D sensing of consistent, dense 3D shape of a dynamic, non-rigid object.

Dense Video Annotation

Dense video annotation requiring only sparse bounding-box supervision.

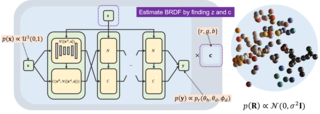

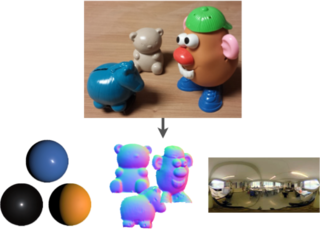

Invertible Neural BRDF for Object Inverse Rendering

BRDF model with conditional normalizing flow for single-image inverse rendering.

Appearance and Shape from Water Reflection

Water reflection lets us recover 3D geometry with HDR appearance from a single image.

Surface Normals and Shape from Water

Per-pixel surface normals and depth of dynamic objects in water using light absorption.

Variable Ring Light Imaging

Transient subsurface light transport from steady-state surface appearance and ordinary imaging.

Wetness and Dry Appearance Recovery

Spectral appearance model of wet surfaces for recovering wetness and dry appearance from a single image.

Shape from Water

Infrared absorption for real-time shape recovery of underwater objects.

Radiometric Scene Decomposition

Inverse rendering of the reflectance, illumination, and geometry of real-world scenes from a few RGB-D images.

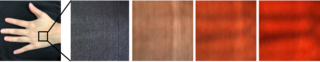

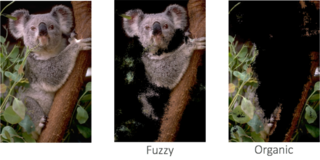

Perceptual Visual Material Attributes

Discovering locally-recognizable material attributes from crowdsourced perceptual material distances.

Visual Material Traits

Representing material categories with their inherent properties exhibited in their looks.

Multiview Shape and Reflectance from Natural Illumination

Multiview inverse rendering of shape and reflectance in a canonical probabilistic formulation.

Shape and Reflectance from Natural Illumination

Single-image inverse rendering of complex reflectance and geometry under natural illumination.

Reflectance and Natural Illumination from a Single Image

Single-image inverse rendering of reflectance and natural illumination.

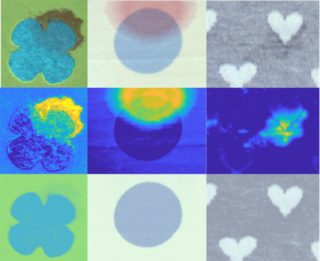

Single Image Multimaterial Estimation

Joint estimation and segmentation of reflectance on an object surface from a single image.

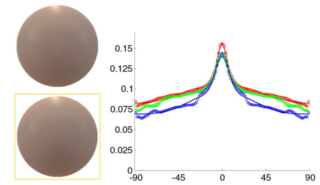

Directional Statistics BRDF Model

A compact yet accurate BRDF representation using directional statistics.

3D Geometric Scale Space

Scale-space representation of surface geometry, with scale-dependent/invariant features and descriptors for geometry processing.

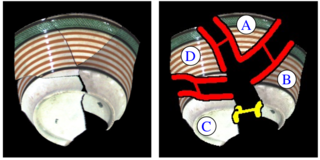

Reassembling Thin Surface Geometry

3D reassembly of fragmented, thin objects based on a novel scale-space representation of contour geometry and photometry.

Tracking People in Crowds

A novel Bayesian framework for tracking pedestrians in videos of crowded scenes with a space-time crowd flow model.

Anomaly Detection in Crowds

Modeling local spatio-temporal motion patterns and detecting anomalous behavior in crowded scenes.

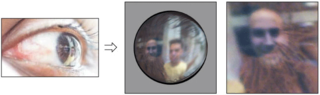

Eyes for Relighting

Extracting the surrounding light from a person’s eye for relighting faces.

The World in An Eye

Eyes can tell us where the person is, what the person is looking at, and even the surrounding lighting and object shape in front of her.